As organizations scale their media presence, they often assume that clarity is a downstream effect of repetition. The logic is familiar: if enough content is published across enough channels with sufficient consistency, the message will eventually land. Scale is treated as a production and distribution problem, and meaning is expected to stabilize through exposure.

In practice, the opposite occurs. Media scale does not resolve ambiguity. It magnifies it. When meaning has not been clearly defined, deliberately constrained, and actively owned upstream, scaling content simply reproduces that ambiguity faster and more uniformly. What appears to be momentum is often the systematic amplification of unresolved decisions.

This distinction matters because most content failures are not caused by poor execution. They are caused by unstable meaning being asked to perform work it was never designed to carry.

Why is meaning assumed long before it is designed?

Most organizations believe they have meaning because they have language. They can point to positioning statements, value propositions, messaging frameworks, and strategy decks as evidence that alignment exists. These artifacts create confidence, but they often mask a more fundamental absence of agreement about what the organization is actually trying to do, for whom, and under which constraints.

Language without shared intent becomes interpretive rather than directive. Teams read the same words through the lens of their own incentives and responsibilities. Marketing emphasizes differentiation and reach. Product emphasizes capability and roadmap logic. Sales adapts framing to whatever moves a deal forward. Support becomes responsible for reconciling expectations after the fact.

At low levels of output, this interpretive spread is survivable. Experienced practitioners compensate through judgement. They add context in meetings, smooth inconsistencies in copy, and make local decisions that preserve coherence well enough. Meaning exists, but it is being actively maintained by people rather than structurally supported.

What changes when content reaches media scale?

Scale removes the human correction layer that once absorbed ambiguity.

As output increases, content is no longer crafted through close, contextual judgement. It moves through systems: templates, workflows, automation, reuse models, and increasingly AI-assisted generation. These systems do not resolve uncertainty. They operationalize it.

When upstream meaning is unresolved, systems faithfully reproduce that uncertainty across channels and time. The result is not overt contradiction, but gradual divergence. The same product begins to sound like it solves different problems depending on where and how it is encountered. Terminology remains consistent, but intent drifts.

This is the point at which confusion becomes infrastructural rather than incidental.

What is semantic drift, and why does it matter at scale?

Semantic drift describes the gradual misalignment of meaning as content moves through an organization’s ecosystem. It is not about tone, voice, or word choice. It is about what the content is implicitly claiming, prioritizing, or promising.

Drift occurs when meaning has not been sufficiently constrained. Without clear boundaries, language stretches to accommodate multiple audiences, use cases, and internal agendas. Over time, the original intent becomes diluted, not through error, but through accommodation.

At scale, semantic drift matters because it erodes trust and efficiency simultaneously. Internally, teams spend more time debating interpretation than making decisions. Externally, audiences encounter inconsistent signals that require extra effort to reconcile. The cost shows up as friction long before it appears in metrics.

Why does AI make meaning problems impossible to ignore?

AI does not introduce ambiguity. It reflects it.

When inputs are vague, AI produces outputs that are confidently vague. When priorities are unresolved, it blends them. When ownership is unclear, it fills gaps with plausible structure rather than explicit judgement. The result often looks polished while feeling hollow, not because the system is failing, but because it is faithfully reproducing the thinking it has been given.

In organizations where humans previously compensated for weak meaning, AI removes that buffer. It exposes the absence of decisions by scaling their consequences. What once felt like a manageable lack of clarity becomes visibly systemic.

Blaming AI for this outcome misses the point. The issue is not generation quality. It is meaning readiness.

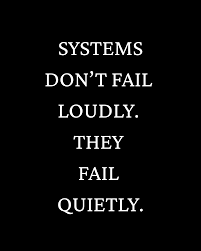

Why do content systems fail quietly instead of breaking?

They fail quietly because they continue to function.

Pages publish. Campaigns launch. Content calendars remain full. From an operational perspective, the system appears healthy. This is what makes meaning failures difficult to diagnose. There is no single point of collapse, only a gradual increase in friction, rework, and explanatory effort.

A content system is not defined by its tools. It is defined by whether meaning remains intact as content moves across teams, channels, and time. When meaning has not been designed as a shared constraint, systems do not collapse. They drift.

By the time performance metrics reflect the problem, the organization is no longer debating copy. It is debating what the product is actually for.

What needs to be in place before scale becomes an advantage?

Scale rewards constraint, not creativity.

Before content can scale effectively, meaning must be explicitly designed. This requires decisions, not documentation. At a minimum, organizations need a shared commitment to the problem they are solving, clear prioritization of who that problem is for, and explicit guardrails that define what the organization will and will not claim.

Ownership of meaning must also be clear. Not ownership of production or approval, but ownership of judgement. Without that, systems default to repetition rather than coherence.

When these conditions exist, scale becomes leverage. Automation reinforces clarity. AI accelerates rather than dilutes intent. Content systems carry meaning instead of distorting it.

Without them, media scale is just loud confusion, repeated with confidence.

Want to chat? calendly.com/sajwriter